The beginnings

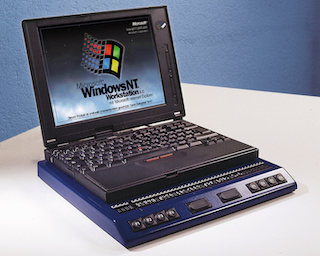

I had such an experience in the autumn of 1995. I visited a small exhibition of assistive technology products for the blind, which took place at the Hamburg-Farmsen Vocational Promotion Agency. At that time I had just started my studies in business informatics at the University of Applied Sciences Wedel and needed a notebook with a refreshable Braille display. I had already visited several exhibitions and Hamburg-based representatives of assistive technology vendors, but had not yet found anything that really convinced me. Either there was no mobile solution at all, or the systems on offer were simply too heavy and unwieldy to carry around. I had to travel an hour each way by public transit between my home and the university, so weight was a key factor for me.

I had heard that Help Tech, which at that time was still called Handy Tech, would be present at this exhibition. Handy Tech had emerged from another company called Blista-EHG only a year earlier. In addition, the company had no representative in Hamburg, so I had not yet been able to try their products. According to the announcement, they would exhibit their new, innovative line of Braille displays, combined with a first version of the screen reader JAWS for Windows. That definitely piqued my curiosity.

I arrived at the exhibition venue and found the exhibit hall right away. An employee showed me the table where Handy Tech had set up a Braille display connected to a desktop computer. The Handy Tech representative was in conversation with another visitor at that moment. I simply sat down in front of the display and started exploring what was in front of me.

And what can I say? It was love at first touch, so to speak! The Braille display that stood in front of me was a model called "Braille Window Modular 84". Unlike all the other displays I had looked at, its cells were not flat but curved inwards, with an upward tilt towards the user. These felt very gentle under my fingertips. The hand automatically fell into a slightly curved posture, similar to the relaxed posture that is ideal for pianists. The dot caps were pleasantly round, and the fingers glided over the displayed information effortlessly in this natural hand posture.

The keyboard mounted on the display had clearly noticeable dots on the common keys, so that orientation was a breeze. The PC was open to MS Word in a document in which someone had written the mandatory "This is a test". I started writing something into the document to see how the keyboard felt, then opened the menu bar using the Alt key, then flipped through other applications and the Program Manager of Windows 3.11 with Alt-Tab. To my surprise, I found that I was simply using Windows the whole time, and the Braille display always showed me the current location. I didn't have to learn awkwardly complicated keyboard shortcuts. In contrast to many other screen reader experiments at the time, JAWS followed a different philosophy. Right from the start, JAWS aimed not to stand in the user's way, but to put the use of Windows and its programs at the forefront.

At that moment I knew I wanted this system for my studies. The Handy Tech employee had since ended his conversation and turned to me. He was blind himself, which pleased me very much. This meant that he would probably be able to answer some more in-depth questions, which had caused stuttering, long faces, and other signs of lack of knowledge in mostly sighted representatives from other AT vendors before.

The conversation was indeed very constructive, informative and illuminating. We discussed my requirements, he noted down my details, and a few weeks later, they made me an offer. At that time, however, I needed the mobile version of the system, i.e. the little sibling display "Modular 44". Using an auxiliary construction, I could connect this display to a suitable notebook in a way that it formed a stable unit. Just as easily, however, the notebook could be undocked, and the keyboard and the corresponding number pad could then be clipped on. I could then use the display comfortably with the stationary PC at home.

Just in time for the 2nd semester at the beginning of 1996, I had mastered the administrative procedures and received the system along with my first JAWS version. A few weeks later I programmed my first Windows application in Borland Delphi. I reported on my experience in the Henter-Joyce Forum on CompuServe, and how I managed to make the development environment itself more accessible using configuration adjustments and JAWS macros.

In July, I received a surprising call that I was being invited to a workshop, which would take place in a lovely remote hotel somewhere in the Black Forest, in the south-west of Germany. This workshop was jointly hosted by Handy Tech and the European importer of JAWS. During the first day, I spontaneously took over the guidance of the Macro Beginner group because I was already familiar with macro programming. In August, in parallel with my studies, I started working as a translator for JAWS. Together with another colleague, I translated the user interface, macros and help into German. The rest, as they say, is history.

In conclusion, this contact with Handy Tech not only got me my first Braille display, but also my first job. I worked at Freedom Scientific, makers of JAWS, until 2007.

The Bookworm: A companion on many long train journeys and flights

Through my work at the JAWS vendor, I also made my first appearance at the Reha (later RehaCare) trade show in Düsseldorf in 1997. Handy Tech had announced a world premiere for this occasion: a Braille reader for the trouser or vest pocket. I had always been a passionate reader of Braille books, so I was naturally very curious. I went to Düsseldorf and experienced a real surprise.

When Sigi Kipke, the managing director, showed me the Bookworm for the first time, it was like a revelation. The Bookworm was a box with eight of the concave Braille modules that I had come to love and rely on heavily in my Braille display. The box was about as wide as a hand's span. Buttons to the left and right of the modules scrolled backward and forward, and above the modules were an Escape and an Enter key. The modules were protected by a movable lid. The battery-powered device switched on the moment the lid was pulled back to reveal the Braille cells. The case almost snuggled into the palm of my hand. One used the index finger to read the displayed information. You could scroll back or forward with the other hand or leave the scrolling to the auto-advance feature, which was built into the software and which you could adjust quite well to your reading speed.

The data was uploaded to the Bookworm by an MS-DOS-based program called BWCom. This was later replaced by the Windows version HTCom, which is still part of the Handy Tech Braille systems today. The software converted TXT or HTML files and translated them into Grade 2 if desired. The resulting files had not only paragraphs, but also a heading structure. The Bookworm actually had a multi-level heading navigation facility, which made moving around even in large files easy.

Unfortunately, this heading navigation was lost in their later Braille notetakers, so you have to make do with the search function of the editor to find the next heading. But it was precisely this heading navigation that showed how much the bookworm was ahead of its time. Screen readers received the ability to navigate by headings on websites only two to three years later. And the development of the digital audiobook format DAISY, which also has the ability to navigate by different heading levels, was still in its early iterations at the time.

Many critics argued that eight Braille modules were too few to read sensibly and fluently. However, I found that the high level of mobility outweighed this argument, at least for me. In any case, by saving up, and receiving a subsidy from my parents as a gift, I made sure that I got one of the first market-ready Bookworm devices for my 25th birthday in April 1998. Over the course of the following years, on many long transit and train journeys as well as long-haul flights, it kept me good company and helped kill time.

Figure 1: Bookworm on a standing table at a café (Source: Help Tech)

In 2000, a small German newspaper called Taz was one of the first newspapers in Germany to offer digital subscriptions, not only in the mostly inaccessible PDF format, but also in HTML and plain text formats. The HTML version was a bit too bloated to be easily consumed by the Bookworm software. So, I wrote a small program in Delphi that converted the plain text version of an edition into a simple HTML framework so that BWCom could structure it and load it into the Bookworm. I could then navigate from main part to main part at the first heading level, between departments at the second, and between articles at the third. I very often took the daily edition of the Taz with me when going on longer trips or lying on the couch, and browsed it the way others skim the daily newspaper. In the same year, Der Spiegel also offered its weekly edition in a format that BWCom could process and make accessible to the Bookworm. Here, too, navigation at different heading levels was possible.
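
The original Delphi program is not reproduced in this article. Purely as an illustration of the idea, a minimal Python sketch of such a converter might look like the following. The heading markers (`=== `, `== `, `= `) and the function name `text_to_html` are assumptions made up for this example; the actual layout of the plain text edition is not described here.

```python
from html import escape

# Hypothetical markers: the real layout of the plain-text edition is not
# described in the article, so these prefixes are purely illustrative.
HEADING_MARKERS = [
    ("=== ", 1),  # main part of the paper
    ("== ", 2),   # department
    ("= ", 3),    # individual article
]


def text_to_html(text: str, title: str = "Edition") -> str:
    """Wrap plain text in a minimal HTML skeleton with three heading levels."""
    body = []
    paragraph = []

    def flush_paragraph():
        # Emit the lines collected so far as a single <p> element.
        if paragraph:
            body.append("<p>" + escape(" ".join(paragraph)) + "</p>")
            paragraph.clear()

    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line:
            # A blank line ends the current paragraph.
            flush_paragraph()
            continue
        for marker, level in HEADING_MARKERS:
            if line.startswith(marker):
                flush_paragraph()
                heading = escape(line[len(marker):].strip())
                body.append(f"<h{level}>{heading}</h{level}>")
                break
        else:
            # No marker matched, so the line is ordinary body text.
            paragraph.append(line)
    flush_paragraph()

    return (
        "<html><head><title>" + escape(title) + "</title></head>\n<body>\n"
        + "\n".join(body)
        + "\n</body></html>\n"
    )
```

Keeping the output to plain headings and paragraphs mirrors the "simple HTML framework" described above: three heading levels are all that is needed for navigation by main part, department, and article.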

Even my first guide dog Falko liked the Bookworm: One morning during the acclimatization phase, he grabbed it from the bedside table and chewed on it slightly. I had gone to take a shower and had carelessly left the Bookworm lying there. Handy Tech later kindly built a new housing around the interior, one that was again a pleasure to hold.

Figure 2: The Bookworm (Source: Help Tech)

The Bookworm accompanied me for a long time until a few years ago, when it fell victim to a leaked battery, which irreparably damaged its electronic parts. It has since found a worthy successor in an Actilino. I will write about this one in a later article. However, I still miss the heading navigation, and may I say, dearly.

Other developments and products

In 1999, my workplace, now converted into a full-time job, was upgraded with both a Braille Window Modular 84 and a notebook display called Braille Top. This was slightly smaller and handier than the Modular 44 and accompanied me on many national and international trips over the next few years. It was the only Help Tech display I ever owned that had no concave modules.

Figure 3: The Braille Top with flat Braille cells clearly visible

In 2007, the displays were sold and found new homes with nice people, who certainly made good use of them for a few more years. I got both a Modular Evolution 88, the first display that had ATC technology, and a notebook display called Braille Star 40. The former now lives with a lady near Potsdam because I only work with notebooks nowadays and simply have no use for large desktop-line displays anymore. The Braille Star still works in principle, but it is old now and shows age-related failures. I hope to replace it soon with its successor, the Active Star.

But even aside from the devices I used myself, I kept an eye on the development at Handy Tech, which was renamed Help Tech in 2018. For example, several times during visits or exhibitions, I played with the Braillino, the first 20-cell Braille display they made. At the time, the Braillino was pretty much tailored to the Nokia Communicator, one of the first accessible smartphones. It is the precursor of the already mentioned Actilino, which I use as a reader and for note-taking.

I also first saw the Braille Wave at the fair where it debuted. It was released a year after the Bookworm and was their first display with built-in smart functions. This included not only a notepad and calculator, but also a scheduler and other useful functions that could be used when away from a computer. The functions introduced at that time have been continuously developed further and are still part of many Braille systems from Help Tech. The key difference was that, unlike other notetakers, Help Tech's Braille Wave was Braille first, not speech first with Braille developed as an add-on.

I even got a chance to test the successor to the Braille Wave, the Active Braille, for a few weeks, when hardly anyone knew yet that it had been released. I got to try the music Braille feature. This is a feature where one can enter musical notes in Braille and immediately hear the note played back. This feature is also available in the Actilino and the Active Star.

In general, Actilino and its bigger sibling, Active Braille, are the most exciting Braille systems Help Tech is currently offering. This is because of their features and portability. Reading without ATC is really starting to bother me when I am using other Braille displays. ATC means that the Braille display automatically recognizes the position of my finger. When it reaches the last displayed character, the display automatically pans to the next segment. This means that I'm no longer bound to a fixed time interval when reading one Braille display segment, as is the case in virtually all Braille auto-advance features in screen readers and was also the case in the Bookworm. If there is a term or name somewhere that I want or need to inspect more closely, I have all the time in the world. When I am ready to continue, I swipe to the end of the cells. ATC recognizes the movement of my finger and advances the display. I move my hand back to the left and continue reading. On the Actilino I can read for hours without getting tired. The natural hand posture, which I have already described above, does the rest.

Why Help Tech again and again?

Well, there are several reasons for this. It's not as if I hadn't worked with other Braille displays; for a time, I even did so for a living. I was using displays made by my former employer, such as the Focus or PAC Mate displays. So, I was in fact working a lot with displays that weren't from Handy Tech, and I noticed the differences during day-to-day productive use. But when I had the choice, I kept coming back to Handy Tech displays time and again.

On the one hand, it is not only the displays themselves that are really cleverly thought out. The Braille Top included a backpack with all sorts of useful compartments, which fit the display and an accompanying notebook exactly. The Bookworm's carrying case contained a rain cape. You could pull this rain cover from the side pocket so that all surfaces were protected from water, including those parts of the Bookworm that were left exposed when it was in the case.

The ergonomics are unparalleled, not only because of the concave modules, but also because of the arrangement of the Braille keys. The controls always have exactly the right pressure point for my fingers. Thanks to their design, the housings are also very robust, yet still very pleasant to the touch. Nothing about the buttons wobbles or feels loose. And in my case, the concave modules are even a real health factor: I have found that when I do extensive reading or work with code on a Braille display with flat modules for a longer time, I get pain in the finger joints and the palm of my hand. If I stop reading on such a display and return to one with concave modules, the pain disappears, and I can read for hours, as I already mentioned.

On the other hand, there are the features of the Braille systems themselves. They are simple enough for beginners, but also have features for real power users like me. We can squeeze a lot out of the editor or other parts. They even offer learning and training opportunities, for example via the drivers for JAWS or within Music Braille, especially when displays that have ATC are used. I will cover more details about that in the article about Actilino. And with every function, I have the feeling that it was carefully developed and actually designed by people who would use something like this themselves, from users for users.

I hope that Help Tech will remain true to this philosophy when developing future products as well. There have been plenty of attempts to develop Braille notetakers based on Android, and they always feel rather half-baked or inconsistent to me, as if the parts do not quite fit together. This is particularly noticeable when, in addition to the proprietary blindness-specific functions, there are parts of the operating system that use the screen reader integrated into Android and the BrailleBack component. The differences are often so profound that they almost sting under the fingers. With Help Tech systems, everything feels like one consistent and coherent piece, no matter what the task at hand may be.

Another point is the superb documentation. The manuals are extensive. I still vividly remember the two thick volumes for the DOS screen reader Braille Window Pro, which was included with my first Braille display. Because I was a programmer myself and used Turbo Pascal for DOS and other programs that were not exactly everyday fare, I was already getting a lot out of this DOS screen reader. These manuals were definitely a great help.

Even for current Braille systems, the manuals are very well-structured and describe all functions in detail without bloating the text unnecessarily. For manuals, this is almost an art form: describing the functions of a product exhaustively without the text becoming a shambles.

And if there is a problem, I haven't seen any other company that is so committed to the interests of its customers. There is no attempt to sugarcoat bugs, to sweep current problems under the carpet or to shift the blame for the malfunction onto the user. The problem is methodically recorded and analyzed to determine where it lies: in the hardware, in the software, in a user error, or quite simply in the fact that the user has tried something that no one had ever thought of. Or an opportunity for a new function is detected and then possibly even implemented in a later version of the device software. As a customer, I feel that I am being taken seriously and treated with honesty and respect. And there are companies, even in the assistive technology industry, where I have experienced the opposite. This kind of thing creates lasting trust, and when trust is there, I like to come back as a customer.

And one more point is crucial: At Help Tech, blind and visually impaired people work in various key areas (development, support, sales) on the very products that blind people are later supposed to use. It shows. It makes a difference whether blindness-specific products are being developed for the blind by sighted people who believe they know what a blind user might need, or whether devices and functions are being developed by the blind for the blind.

Therefore, my choice will continue to fall on Help Tech products in the future. They are exactly what I need in the Braille space.

Disclaimer

I am not an employee of Help Tech and have never been one. I am just an avid customer who wrote an article on the occasion of the 25th anniversary of his first encounter with these extraordinary braille displays.

The pictures used in this article were kindly provided to me by Help Tech and are for illustration purposes only.
